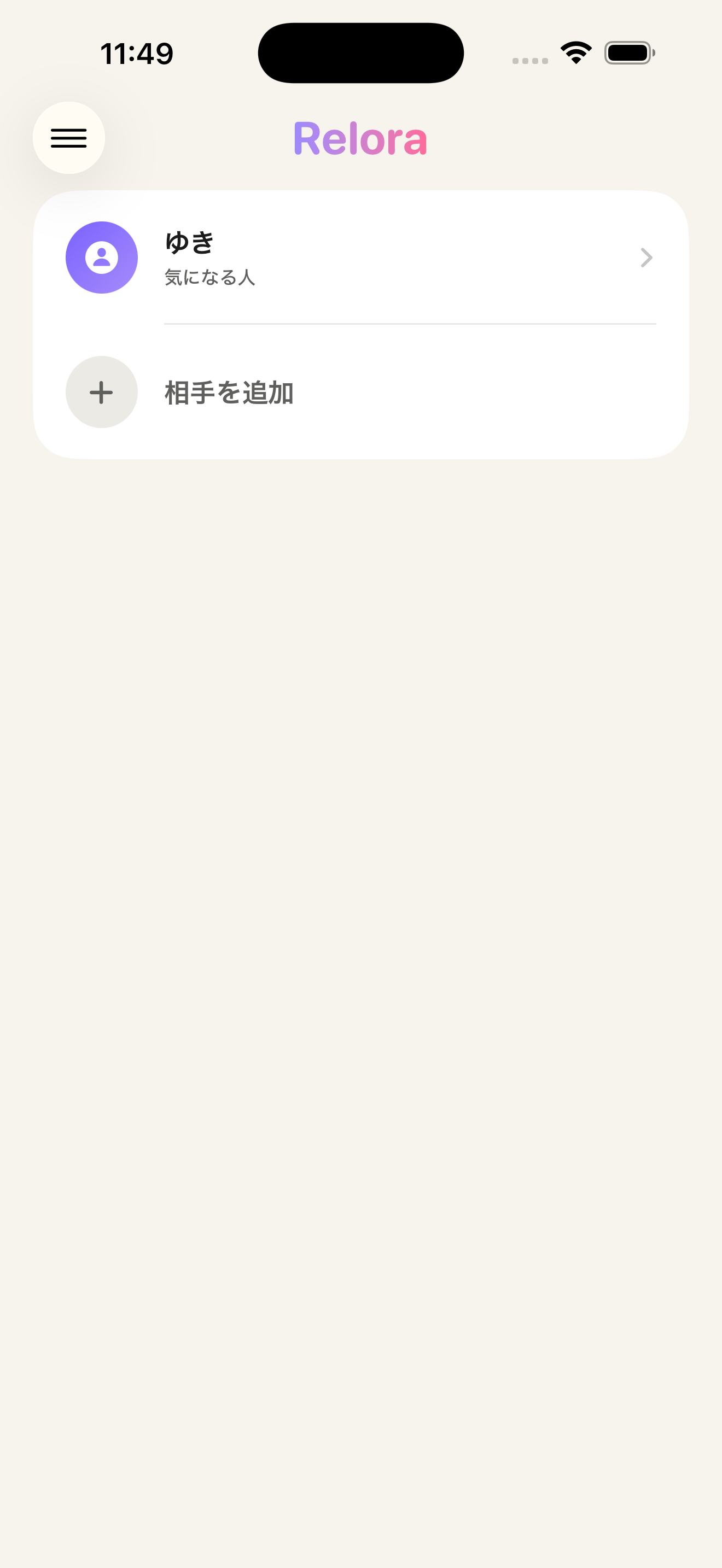

Relora

An AI that reads between the lines of love texts. OCR runs on the device; the psychological analysis runs on a cloud LLM.

Overview

Relora reads a single screenshot of a chat thread, reconstructs the conversation, and surfaces the emotional temperature and possible next moves. OCR runs entirely on-device via Apple Vision; analysis and reply suggestions run on Amazon Bedrock with Qwen3 Next 80B or Claude Sonnet 4.6.

The problem

Stepping back from a chat with someone you care about is hard, and there are not many people you can ask. At the same time, pasting a raw chat history into a cloud AI feels deeply uncomfortable.

Key features

- Reconstructs the conversation from a single screenshot

- On-device OCR via Apple Vision keeps the raw text local

- Surfaces relationship temperature and risk points in one view

- Generates multiple reply candidates tailored to the situation

- Free / Pro tiers powered by StoreKit 2

Tech stack

Architecture

The iOS app uses SwiftUI with MVVM and SwiftData for persistence. Images are OCR-processed on-device with Apple Vision, and only the extracted text is sent through API Gateway + Lambda to Amazon Bedrock. Infrastructure is defined in AWS CDK (TypeScript); Cognito gates access and Bedrock Guardrails enforce prompt safety.
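To make the "only text leaves the device" boundary concrete, here is a minimal sketch of how a Lambda handler might validate an incoming request body before it goes anywhere near Bedrock. The payload shape (`language`, `messages`, `speaker`, `text`) and the function name `parseAnalysisRequest` are assumptions for illustration; the source does not describe Relora's actual API contract.

```typescript
// Hypothetical request shape: text-only messages reconstructed on-device.
type Speaker = "me" | "them";

interface ChatMessage {
  speaker: Speaker;
  text: string;
}

interface AnalysisRequest {
  language: string;
  messages: ChatMessage[];
}

function isSpeaker(value: unknown): value is Speaker {
  return value === "me" || value === "them";
}

// Parses and validates a raw JSON body. Any field other than `language`
// and `messages` (for example an image blob) is rejected outright.
function parseAnalysisRequest(rawBody: string): AnalysisRequest {
  const data: unknown = JSON.parse(rawBody);
  if (typeof data !== "object" || data === null || Array.isArray(data)) {
    throw new Error("body must be a JSON object");
  }

  const { language, messages, ...extra } = data as Record<string, unknown>;
  const extraKeys = Object.keys(extra);
  if (extraKeys.length > 0) {
    throw new Error(`unexpected fields: ${extraKeys.join(", ")}`);
  }
  if (typeof language !== "string" || language.length === 0) {
    throw new Error("language must be a non-empty string");
  }
  if (!Array.isArray(messages) || messages.length === 0) {
    throw new Error("messages must be a non-empty array");
  }

  const parsed: ChatMessage[] = messages.map((item: unknown, index: number) => {
    if (typeof item !== "object" || item === null) {
      throw new Error(`messages[${index}] must be an object`);
    }
    const { speaker, text } = item as Record<string, unknown>;
    if (!isSpeaker(speaker)) {
      throw new Error(`messages[${index}].speaker must be "me" or "them"`);
    }
    if (typeof text !== "string" || text.trim().length === 0) {
      throw new Error(`messages[${index}].text must be a non-empty string`);
    }
    return { speaker, text: text.trim() };
  });

  return { language, messages: parsed };
}
```

Rejecting unknown fields, rather than silently ignoring them, is one way to keep a client bug from ever shipping image data to the backend.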

How AI is used

On Bedrock, the app routes between Qwen3 Next 80B (free tier) and Claude Sonnet 4.6 (paid tier), holding analysis quality steady with a psychology-grounded system prompt and per-language guidance. Prompt templates ship as a Lambda Layer.
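The tier-based routing and per-language guidance described above could be sketched roughly as follows. This is an illustration under stated assumptions, not Relora's actual code: the tier names, the `selectModel` and `buildSystemPrompt` helpers, the fallback-to-English behavior, and the model ID strings are all hypothetical. The IDs are deliberate placeholders, since the source does not give the real Bedrock identifiers.

```typescript
// Hypothetical tier names mirroring the Free / Pro split.
type Tier = "free" | "pro";

interface ModelChoice {
  label: string;
  modelId: string; // placeholder, NOT a real Bedrock model ID
}

const MODEL_BY_TIER: Record<Tier, ModelChoice> = {
  free: { label: "Qwen3 Next 80B", modelId: "PLACEHOLDER_QWEN3_NEXT_80B_MODEL_ID" },
  pro: { label: "Claude Sonnet 4.6", modelId: "PLACEHOLDER_CLAUDE_SONNET_4_6_MODEL_ID" },
};

// Picks the model for a subscription tier.
function selectModel(tier: Tier): ModelChoice {
  return MODEL_BY_TIER[tier];
}

// Appends language-specific guidance to a shared base prompt.
// Falls back to `fallbackLanguage` (an assumption) when no guidance
// exists for the requested language, and to the bare base prompt
// when neither is available.
function buildSystemPrompt(
  basePrompt: string,
  guidanceByLanguage: Record<string, string>,
  language: string,
  fallbackLanguage: string = "en"
): string {
  const guidance =
    guidanceByLanguage[language] ?? guidanceByLanguage[fallbackLanguage] ?? "";
  return guidance.length > 0 ? `${basePrompt}\n\n${guidance}` : basePrompt;
}
```

Keeping the same base prompt across both tiers and varying only the model is one plausible reading of how analysis quality is held steady between the free and paid paths.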

Evaluation & Operations

Since launch we've monitored Cognito request patterns and Bedrock latency. Prompt and Guardrails tuning is driven by App Store reviews and support requests.